
AI • Team PixelPilot • 3 min read

Responsible AI: Bias and Fairness

Run quantitative bias audits, carry out targeted fairness tests, and record concrete mitigation steps and outcomes to tr

Introduction

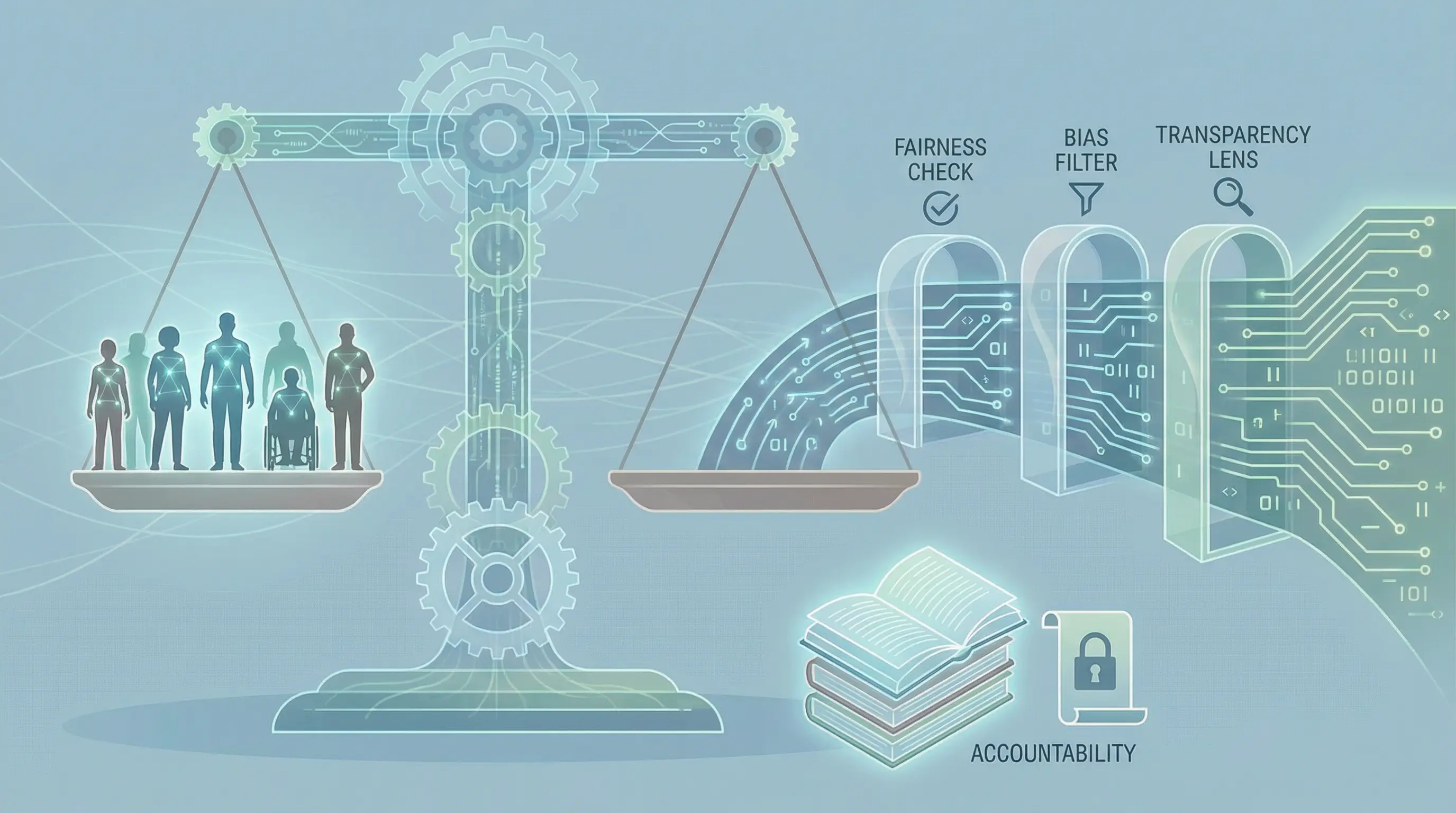

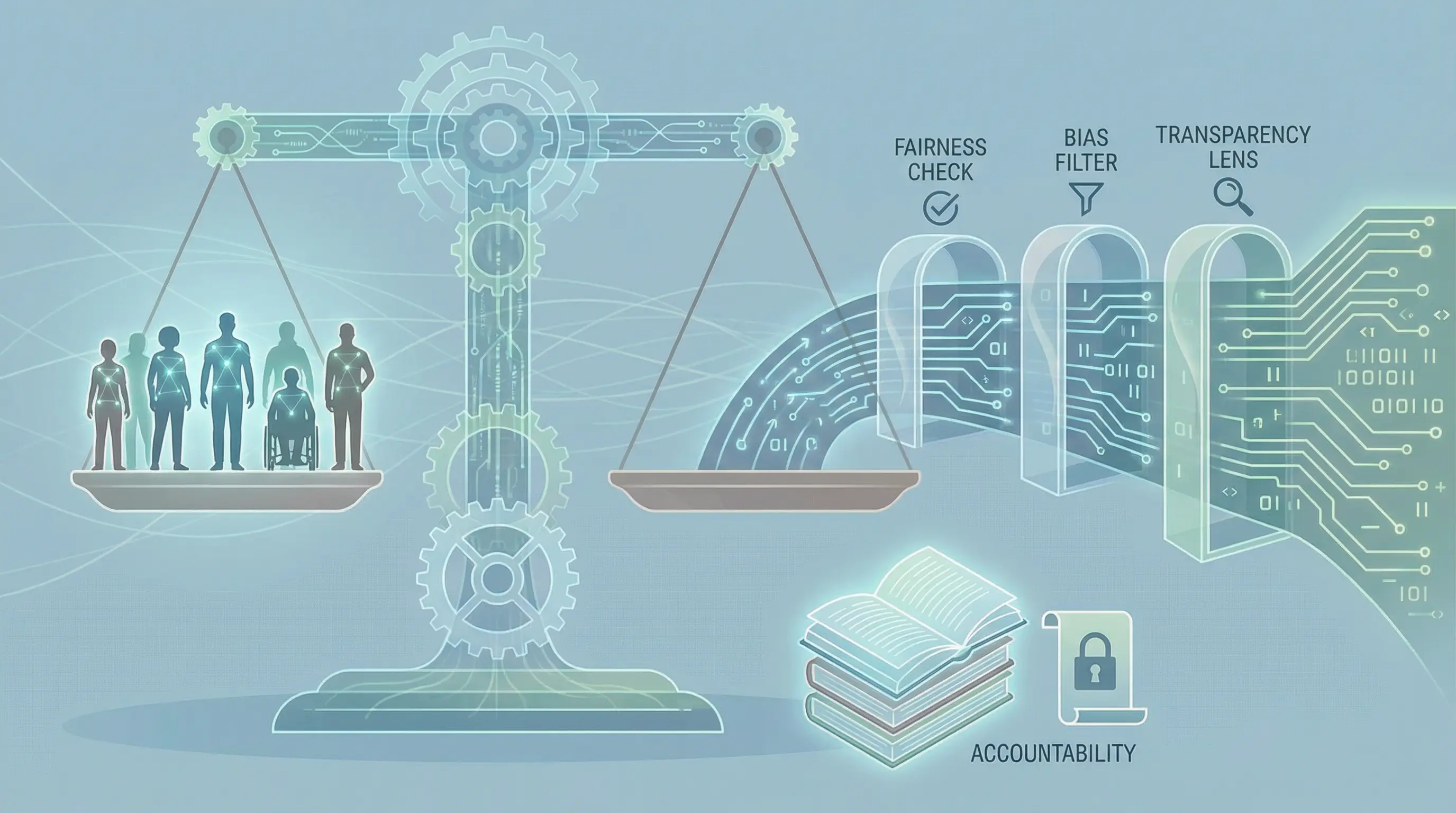

Artificial intelligence is increasingly used to make decisions in areas such as hiring, lending, healthcare, and content moderation. While AI can improve efficiency and decision-making, it can also introduce unintended bias. Responsible AI is the practice of building and operating these systems so that they are fair, transparent, and accountable, which promotes trust and reduces harm.

Understanding Bias in AI

Bias in AI occurs when a model produces outcomes that systematically favor or disadvantage certain groups. Bias can arise from several sources:

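Training data: If the data reflects historical inequalities, the model may reproduce them.

Algorithm design: Modeling choices, such as optimizing only for overall accuracy, can unintentionally amplify bias against groups that are underrepresented in the data.

Feature selection: Features that act as proxies for sensitive attributes, such as a postcode that correlates with ethnicity, can introduce unfairness even when the sensitive attribute itself is excluded.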

Recognizing these sources of bias is the first step toward building fair AI systems.
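One quick, quantitative way to screen for the proxy problem is to compare how each candidate feature is distributed across groups. The sketch below is a crude check under simple assumptions: the records, the feature names (`postcode_band`, `years_experience`), and the helper functions are all hypothetical, and a raw difference in means depends on each feature's scale, so features should be standardized (or tested with a proper association measure) before gaps are compared.

```python
from collections import defaultdict


def group_means(rows, feature, group_key):
    """Average value of `feature` within each group defined by `group_key`."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for row in rows:
        g = row[group_key]
        sums[g] += row[feature]
        counts[g] += 1
    return {g: sums[g] / counts[g] for g in sums}


def proxy_gap(rows, feature, group_key):
    """Largest difference between the feature's group means.
    A large gap hints that the feature may encode the sensitive attribute."""
    means = group_means(rows, feature, group_key)
    return max(means.values()) - min(means.values())


# Hypothetical toy records: `group` is the sensitive attribute.
rows = [
    {"postcode_band": 1, "years_experience": 5, "group": "A"},
    {"postcode_band": 1, "years_experience": 3, "group": "A"},
    {"postcode_band": 0, "years_experience": 4, "group": "B"},
    {"postcode_band": 0, "years_experience": 4, "group": "B"},
]

for feature in ("postcode_band", "years_experience"):
    print(feature, proxy_gap(rows, feature, "group"))
```

In this toy data, `postcode_band` separates the two groups completely while `years_experience` does not, so the former would be the one to investigate before training.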

The Importance of Fairness

Fairness means treating individuals and groups equitably and avoiding unjust discrimination. Biased AI systems can harm the people they affect and expose organizations to legal issues and reputational damage. Ensuring fairness not only protects users but also supports regulatory compliance and ethical business practices.

Techniques to Mitigate Bias

Data Auditing and Preprocessing

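Carefully examining training data for imbalances or harmful patterns is essential. Techniques such as resampling, reweighting, or anonymization (removing sensitive attributes) can reduce bias before model training, although removing an attribute does not help if other features still act as proxies for it.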
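Reweighting can be illustrated with the reweighing scheme of Kamiran and Calders, which gives each training example the weight w(g, y) = P(g) · P(y) / P(g, y), so that group membership and outcome become statistically independent in the weighted data. This is one named technique among several, shown as a minimal sketch; the function names and the toy groups and labels are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """One weight per example: w(g, y) = P(g) * P(y) / P(g, y).
    Over-represented (group, label) pairs are down-weighted and
    under-represented pairs are up-weighted."""
    n = len(labels)
    count_g = Counter(groups)
    count_y = Counter(labels)
    count_gy = Counter(zip(groups, labels))
    return [
        (count_g[g] / n) * (count_y[y] / n) / (count_gy[(g, y)] / n)
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Hypothetical data: group A has a 75% positive rate, group B only 25%.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
for g in ("A", "B"):
    print(g, round(weighted_positive_rate(groups, labels, weights, g), 3))
```

After weighting, both groups have the same positive rate. The weights can then be passed to any training routine that accepts per-example weights; for example, many scikit-learn estimators take a `sample_weight` argument in `fit`.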

Algorithmic Fairness Methods

Some algorithms incorporate fairness constraints during training so that outcomes do not disproportionately affect particular groups. Regularly testing models against fairness metrics, such as the difference in selection rates or true positive rates between groups, helps detect issues before deployment.
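A pre-deployment fairness test can start with a few standard group metrics: the demographic parity difference (gap in selection rates), the disparate impact ratio (ratio of selection rates), and the equal opportunity difference (gap in true positive rates). The sketch below computes them from scratch for two groups; the function names and toy predictions are hypothetical, and the 0.8 threshold is the "four-fifths" rule of thumb from US employment guidance, not a universal standard.

```python
def selection_rate(preds, groups, group):
    """Share of members of `group` who received a positive prediction."""
    chosen = [p for p, g in zip(preds, groups) if g == group]
    return sum(chosen) / len(chosen)


def true_positive_rate(preds, labels, groups, group):
    """Share of truly positive members of `group` who were predicted positive."""
    hits = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 1]
    return sum(hits) / len(hits)


def fairness_report(preds, labels, groups, privileged, unprivileged):
    """Compare the unprivileged group against the privileged reference group.
    Assumes both groups are non-empty, each has at least one true positive,
    and the privileged selection rate is non-zero."""
    sr_priv = selection_rate(preds, groups, privileged)
    sr_unpriv = selection_rate(preds, groups, unprivileged)
    tpr_priv = true_positive_rate(preds, labels, groups, privileged)
    tpr_unpriv = true_positive_rate(preds, labels, groups, unprivileged)
    ratio = sr_unpriv / sr_priv
    return {
        "demographic_parity_difference": sr_unpriv - sr_priv,
        "disparate_impact_ratio": ratio,
        "equal_opportunity_difference": tpr_unpriv - tpr_priv,
        # Rule of thumb: a ratio below 0.8 is commonly treated as a red flag.
        "four_fifths_flag": ratio < 0.8,
    }


# Hypothetical toy predictions for two groups of five people each.
groups = ["A"] * 5 + ["B"] * 5
labels = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
preds = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0]

report = fairness_report(preds, labels, groups, privileged="A", unprivileged="B")
for name, value in report.items():
    print(name, value)
```

Here group B is selected far less often than group A and its qualified members are missed more often, so the model would fail this test. Open-source libraries such as Fairlearn and AIF360 provide maintained implementations of these and other metrics.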

Continuous Monitoring

Bias can emerge over time as data and environments change. Monitoring AI systems in production allows organizations to detect and address bias dynamically.
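Monitoring in production can reuse the same metrics over a sliding window of recent decisions. The following is a minimal sketch, assuming binary decisions and a single privileged/unprivileged pair; the class name, window size, and 0.8 alert threshold are hypothetical defaults that a real deployment would tune.

```python
from collections import deque


class SelectionRateMonitor:
    """Tracks per-group selection rates over the most recent `window`
    decisions and raises an alert when the disparate impact ratio
    (unprivileged rate / privileged rate) falls below `threshold`."""

    def __init__(self, privileged, unprivileged, window=1000, threshold=0.8):
        self.privileged = privileged
        self.unprivileged = unprivileged
        self.threshold = threshold
        # Oldest decisions drop out automatically once the window is full.
        self.decisions = deque(maxlen=window)

    def record(self, group, decision):
        """Log one production decision (1 = positive outcome, 0 = negative)."""
        self.decisions.append((group, decision))

    def _rate(self, group):
        outcomes = [d for g, d in self.decisions if g == group]
        return sum(outcomes) / len(outcomes) if outcomes else None

    def check(self):
        """Return the current ratio and alert status, or None if there is
        not yet enough data in the window to compare the groups."""
        p = self._rate(self.privileged)
        u = self._rate(self.unprivileged)
        if p is None or u is None or p == 0:
            return None
        ratio = u / p
        return {"ratio": ratio, "alert": ratio < self.threshold}


# Usage with a tiny window to show drift being detected.
monitor = SelectionRateMonitor("A", "B", window=4)
for group, decision in [("A", 1), ("B", 1), ("A", 1), ("B", 1)]:
    monitor.record(group, decision)
before = monitor.check()

for group, decision in [("A", 1), ("B", 0), ("A", 1), ("B", 0)]:
    monitor.record(group, decision)
after = monitor.check()
print(before, after)
```

Using a bounded window means the check reflects recent behaviour rather than the model's whole history, which is what makes gradual drift visible.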

Transparent and Explainable AI

Making AI decisions understandable helps stakeholders identify potential bias and ensures accountability. Explainable AI techniques clarify why a model made a specific decision, making it easier to spot unfair patterns.
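For a linear scoring model, a single decision can be explained exactly by splitting the score into per-feature contributions relative to a baseline input: contribution_i = w_i · (x_i − baseline_i), and the contributions sum to score(x) − score(baseline). The weights, feature names, and applicant below are hypothetical; model-agnostic methods such as SHAP or LIME extend the same idea to non-linear models.

```python
def linear_score(weights, bias, x):
    """Score of a linear model: bias + sum of weight * feature value."""
    return bias + sum(weights[name] * x[name] for name in weights)


def explain_linear_decision(weights, bias, x, baseline):
    """Return the score for `x` and each feature's contribution relative
    to `baseline`; contributions sum to score(x) - score(baseline)."""
    contributions = {
        name: weights[name] * (x[name] - baseline[name]) for name in weights
    }
    return linear_score(weights, bias, x), contributions


# Hypothetical model and inputs.
weights = {"income": 0.5, "debt_ratio": -2.0, "postcode_band": 1.5}
bias = -1.0
applicant = {"income": 4.0, "debt_ratio": 0.5, "postcode_band": 0}
baseline = {"income": 3.0, "debt_ratio": 0.5, "postcode_band": 1}

score, contributions = explain_linear_decision(weights, bias, applicant, baseline)
baseline_score = linear_score(weights, bias, baseline)

# Rank features by the size of their effect on this decision.
ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
print(score, ranked)
```

In this example the largest driver of the lower score is `postcode_band`, not income or debt, which is exactly the kind of pattern an explanation should surface for review if postcode is suspected to be a proxy for a sensitive attribute.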

Organizational Practices

Building responsible AI is not only a technical challenge. Organizations should establish governance frameworks, define ethical guidelines, and involve diverse teams in development. Documenting decisions, maintaining audit trails, and engaging stakeholders promote accountability and trust.
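An audit trail is easier to maintain when each fairness review is captured as a structured record of what was measured, what was changed, and what the result was. The schema below is a hypothetical sketch, not a standard; the field names, model name, and metric values are illustrative placeholders rather than real audit results.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class FairnessAuditRecord:
    """One entry in a fairness audit trail (hypothetical schema)."""
    model_name: str
    audit_date: str            # ISO 8601 date, e.g. "2024-01-15"
    sensitive_attribute: str
    metrics_before: dict       # fairness metrics measured before mitigation
    mitigation: str            # what was changed, and why
    metrics_after: dict        # the same metrics re-measured afterwards
    approved_by: list = field(default_factory=list)
    notes: str = ""


# Illustrative placeholder values only, not real audit results.
record = FairnessAuditRecord(
    model_name="loan-approval-model",
    audit_date="2024-01-15",
    sensitive_attribute="age_band",
    metrics_before={"disparate_impact_ratio": 0.70},
    mitigation="Reweighed training data by (group, label) before retraining",
    metrics_after={"disparate_impact_ratio": 0.90},
    approved_by=["model-risk-review"],
)

# One JSON object per line (JSON Lines) keeps the log append-only and diffable.
log_line = json.dumps(asdict(record))
print(log_line)
```

Recording the same metrics before and after each mitigation makes it possible to show later whether a change actually helped.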

Benefits of Responsible AI

Responsible AI builds credibility with users and stakeholders, reduces the risk of legal and regulatory penalties, and fosters inclusive innovation. Fair and transparent AI systems encourage adoption while ensuring that technology serves all people equitably.

Challenges and Considerations

Mitigating bias is complex and ongoing. Challenges include incomplete or unrepresentative data, conflicting fairness definitions, and balancing fairness with accuracy. Organizations must commit to continuous learning, evaluation, and adjustment to maintain responsible AI practices.
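The conflict between fairness definitions, and the tension with accuracy, can be seen in a small constructed example. When the true positive rate differs between groups, even a perfectly accurate classifier satisfies equal opportunity (equal true positive rates) but violates demographic parity (equal selection rates); forcing parity then means approving people whose true label is negative. The data and helper names below are hypothetical.

```python
def selection_rate(preds, groups, group):
    """Share of members of `group` who received a positive prediction."""
    chosen = [p for p, g in zip(preds, groups) if g == group]
    return sum(chosen) / len(chosen)


def true_positive_rate(preds, labels, groups, group):
    """Share of truly positive members of `group` who were predicted positive."""
    hits = [p for p, y, g in zip(preds, labels, groups) if g == group and y == 1]
    return sum(hits) / len(hits)


def accuracy(preds, labels):
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


# Hypothetical data: 6 of 10 in group A are positives, but only 3 of 10 in B.
groups = ["A"] * 10 + ["B"] * 10
labels = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7

# A perfectly accurate classifier: predictions equal the true labels.
preds = list(labels)
dp_gap = selection_rate(preds, groups, "B") - selection_rate(preds, groups, "A")
eo_gap = (true_positive_rate(preds, labels, groups, "B")
          - true_positive_rate(preds, labels, groups, "A"))
print("perfect:", accuracy(preds, labels), dp_gap, eo_gap)

# Enforce demographic parity by approving three group-B negatives
# (indices 13-15), which costs accuracy.
parity_preds = list(preds)
for i in (13, 14, 15):
    parity_preds[i] = 1
print("parity:", accuracy(parity_preds, labels),
      selection_rate(parity_preds, groups, "B")
      - selection_rate(parity_preds, groups, "A"))
```

Neither outcome is "correct" in general; which definition to prioritize is a context-dependent decision that should be made explicitly and documented.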

Conclusion

Bias and fairness are central concerns in responsible AI. By auditing data, applying fairness techniques, monitoring models, and ensuring transparency, organizations can reduce bias and create AI systems that are ethical, reliable, and equitable.

Responsible AI is not only a technical necessity but a societal and business imperative, ensuring that artificial intelligence benefits everyone fairly and responsibly.
