Cybersecurity

Team PixelPilot

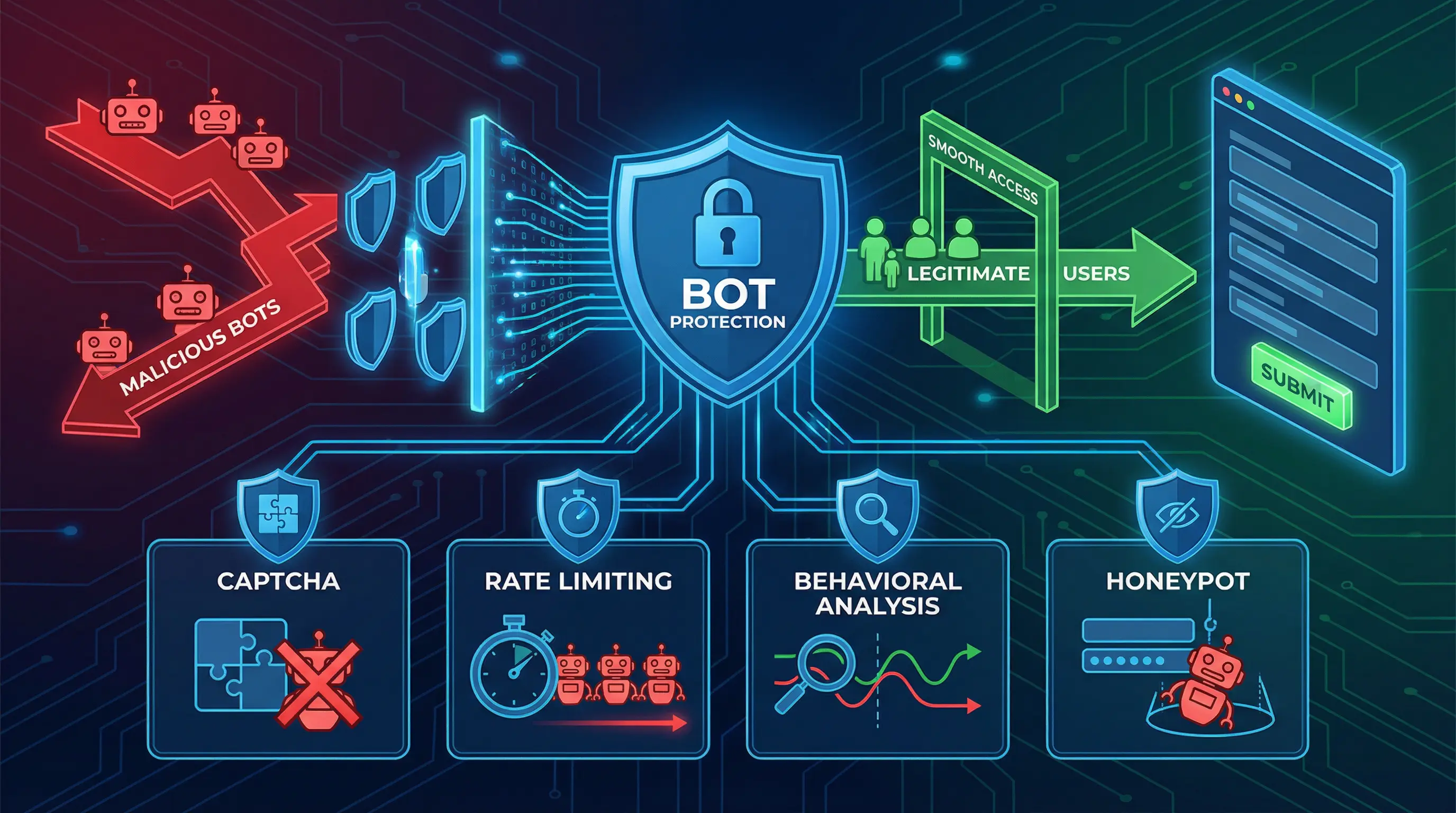

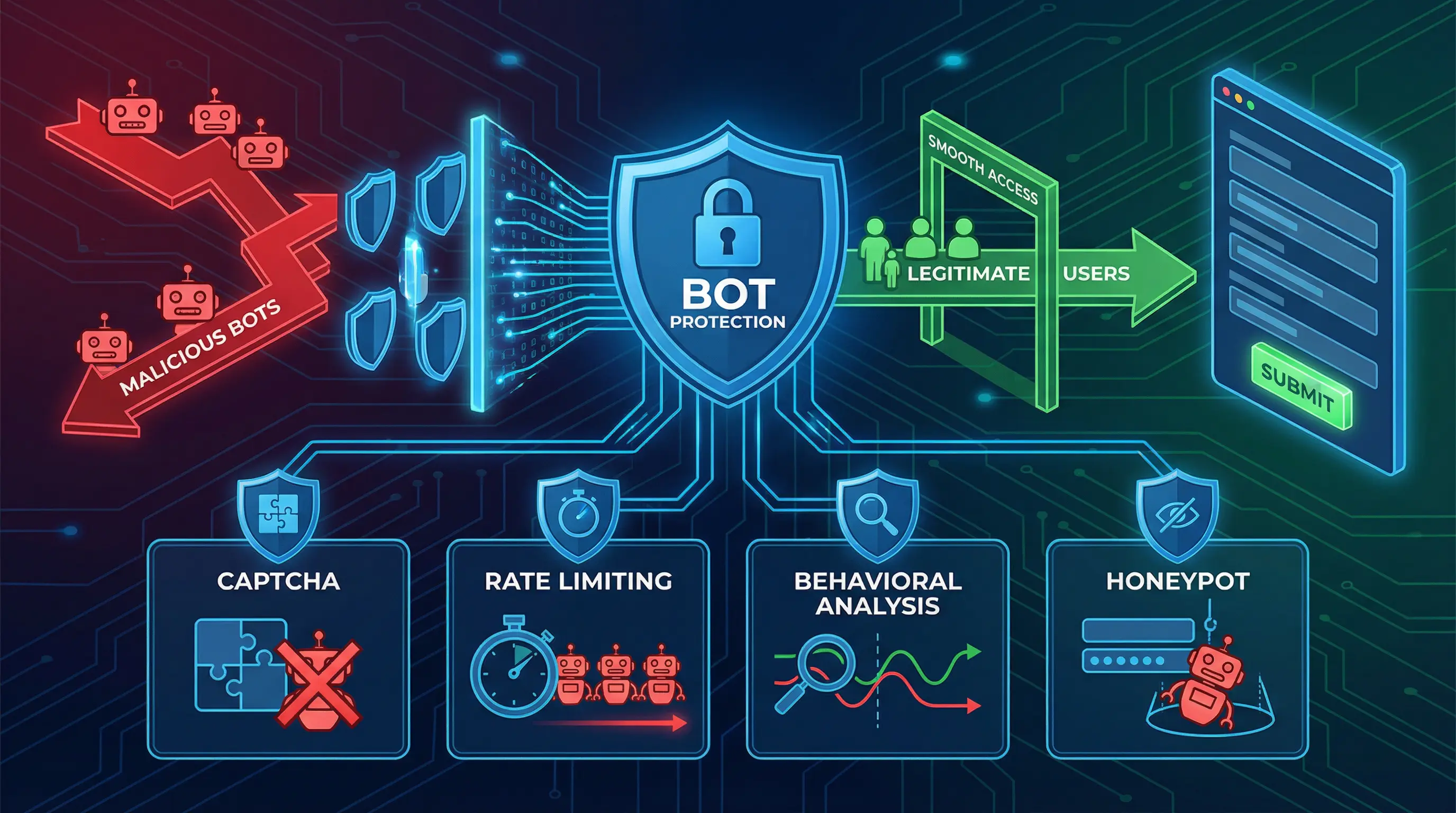

Bot Protection on Forms

Keep form endpoints safe from automated abuse with layered defenses: rate limits, CAPTCHAs, and targeted verification.

Introduction

Forms are essential for websites and applications—they handle sign-ups, lead generation, surveys, payments, and support requests. However, forms are also a primary target for bots, which can submit fake entries, scrape data, or attempt attacks like credential stuffing and spam campaigns.

Implementing bot protection on forms is crucial for security, data integrity, and user experience. Effective protection blocks malicious activity while letting legitimate users interact without friction.

Understanding the Threat

Types of Form-Based Attacks

Spam Submissions – Bots submit irrelevant or malicious content to overload systems or promote unwanted links

Credential Stuffing – Automated login attempts using stolen credentials

Data Scraping – Bots extract sensitive data from forms or hidden fields

Denial of Service (DoS) – High-volume automated submissions can disrupt services

Injection Attacks – Malicious inputs intended to exploit backend systems, e.g., SQL injection

Business Impact

Increased operational costs due to data cleanup and mitigation

False analytics and skewed insights

Decreased user trust if bots compromise accounts or data

Potential regulatory and compliance risks

Strategies for Bot Protection

1. CAPTCHA and reCAPTCHA

CAPTCHA challenges differentiate humans from bots using puzzles or image selection

Google reCAPTCHA v3 scores each request from 0.0 (likely a bot) to 1.0 (likely a human) without explicit user interaction; the site decides how to handle low scores

Pros: Widely used, effective against simple bots

Cons: Can disrupt user experience if overly aggressive
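As a rough sketch of the server-side decision for reCAPTCHA v3, the function below inspects a siteverify response that has already been parsed into a dictionary. The `success`, `score`, and `action` keys follow the documented v3 response; the function name, the three outcome labels, and the 0.5 threshold are assumptions for illustration and should be tuned against real traffic.

```python
from typing import Any, Mapping


def recaptcha_decision(
    result: Mapping[str, Any],
    expected_action: str,
    min_score: float = 0.5,  # assumed threshold, not a recommendation
) -> str:
    """Return "allow", "challenge", or "reject" for a parsed siteverify response."""
    # An invalid or expired token means nothing else in the response can be trusted.
    if not result.get("success"):
        return "reject"
    # The action name set on the client should match what this endpoint expects.
    if result.get("action") != expected_action:
        return "reject"
    score = float(result.get("score", 0.0))
    # Low scores get a follow-up challenge instead of a hard block.
    return "allow" if score >= min_score else "challenge"
```

Routing low scores to a challenge rather than rejecting outright is one way to limit the UX cost noted above.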

2. Honeypots

Invisible fields added to forms that only bots fill

Legitimate users don’t see or interact with these fields

Submissions with filled honeypot fields are flagged as spam

Pros: Seamless user experience

Cons: Less effective against advanced bots
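A minimal sketch of the server-side honeypot check, assuming the form includes a field that is hidden from people with CSS. The field name `website` and the function name are hypothetical.

```python
from typing import Mapping

# Hypothetical honeypot field name; it should look plausible to a bot
# but be hidden from human visitors with CSS.
HONEYPOT_FIELD = "website"


def is_honeypot_triggered(form: Mapping[str, str], field: str = HONEYPOT_FIELD) -> bool:
    """Return True when the hidden field carries any non-whitespace value."""
    return bool(form.get(field, "").strip())
```

A submission for which this returns True can be dropped or flagged quietly, so the bot gets no signal that it was detected.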

3. Rate Limiting and Throttling

Limit the number of submissions per IP or session

Detect suspicious patterns like repeated rapid submissions

Pros: Reduces high-volume attacks

Cons: May require exception handling for legitimate high-traffic users
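One common way to implement per-IP or per-session limits is a sliding window. The sketch below keeps timestamps in memory and is only an illustration: the class name and parameters are assumptions, and a deployment with several servers would need a shared store instead.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict


class SlidingWindowLimiter:
    """Allow at most max_requests per key within the last window_seconds."""

    def __init__(
        self,
        max_requests: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._clock = clock  # injectable so the limiter can be tested
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self._clock()
        hits = self._hits[key]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True
```

The key can be an IP address, a session ID, or a combination, which gives some room to handle legitimate users who share an address.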

4. JavaScript and Behavioral Analysis

Detect bots by analyzing mouse movements, keystrokes, and navigation patterns

Bots often behave mechanically, allowing identification

Pros: Transparent to users, harder for simple bots to mimic

Cons: Requires more advanced implementation and monitoring
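Behavioral signals can be as simple as timing. The sketch below assumes the client reports how long the form was open and the gaps between keystrokes. The function name and both thresholds are illustrative assumptions, and real systems combine many more signals.

```python
from statistics import pstdev
from typing import Sequence


def looks_automated(
    fill_seconds: float,
    key_intervals_ms: Sequence[float],
    min_fill_seconds: float = 2.0,  # assumed: humans rarely finish a form faster
    min_jitter_ms: float = 5.0,     # assumed: human typing rhythm varies more than this
) -> bool:
    """Flag submissions that are implausibly fast or mechanically regular."""
    if fill_seconds < min_fill_seconds:
        return True
    # Only judge rhythm when there are enough keystrokes to be meaningful.
    if len(key_intervals_ms) >= 5 and pstdev(key_intervals_ms) < min_jitter_ms:
        return True
    return False
```

Because client-reported data can be forged, a result like this is better treated as one input to a decision than as a verdict on its own.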

5. IP Reputation and Blacklists

Block submissions from known malicious IP addresses or ranges

Integrate third-party threat intelligence services for updated blacklists

Pros: Prevents known threats proactively

Cons: May block legitimate users in shared or dynamic IP environments
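Checking an address against a list of blocked ranges can be sketched with Python's standard `ipaddress` module. The ranges below are reserved documentation addresses used as placeholders; in practice the list would come from a threat intelligence feed.

```python
import ipaddress
from typing import Iterable

# Placeholder ranges taken from the reserved documentation blocks.
BLOCKED_RANGES = ["203.0.113.0/24", "198.51.100.7/32"]


def is_blocked(ip: str, blocked_ranges: Iterable[str] = BLOCKED_RANGES) -> bool:
    """Return True when ip falls inside any blocked CIDR range."""
    addr = ipaddress.ip_address(ip)
    for cidr in blocked_ranges:
        net = ipaddress.ip_network(cidr, strict=False)
        # Compare only within the same IP version (IPv4 vs IPv6).
        if addr.version == net.version and addr in net:
            return True
    return False
```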

6. Token-Based Verification

Use CSRF tokens or unique session tokens per form submission

Ensures each submission originates from the legitimate website session

Pros: Blocks scripts that post directly to the endpoint without first loading the form

Cons: Needs careful implementation to avoid breaking functionality
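One possible shape for a per-form token is an HMAC signature over the session ID and issue time, so the server can verify it without storing anything. This is a sketch under assumptions: the function names, token layout, and one-hour lifetime are illustrative, and it does not enforce single use.

```python
import hashlib
import hmac
import time
from typing import Optional

# Placeholder only; a real key would be random and loaded from server-side config.
SECRET_KEY = b"replace-with-a-random-server-side-secret"


def _sign(session_id: str, issued: int) -> str:
    msg = f"{session_id}:{issued}".encode()
    return hmac.new(SECRET_KEY, msg, hashlib.sha256).hexdigest()


def issue_token(session_id: str, now: Optional[float] = None) -> str:
    """Create a token to embed in the form as a hidden field."""
    issued = int(time.time() if now is None else now)
    return f"{issued}:{_sign(session_id, issued)}"


def verify_token(
    token: str,
    session_id: str,
    max_age: int = 3600,  # assumed lifetime in seconds
    now: Optional[float] = None,
) -> bool:
    """Check that the token is well-formed, fresh, and bound to this session."""
    try:
        issued_str, sig = token.split(":", 1)
        issued = int(issued_str)
    except ValueError:
        return False
    current = time.time() if now is None else now
    fresh = 0 <= current - issued <= max_age
    expected = _sign(session_id, issued)
    # Constant-time comparison avoids leaking signature bytes through timing.
    return fresh and hmac.compare_digest(sig.encode(), expected.encode())
```

Most web frameworks ship their own CSRF protection, which is usually a safer choice than hand-rolled tokens.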

Best Practices

Combine Multiple Techniques – Use CAPTCHAs, honeypots, and behavioral analysis together for layered protection

Monitor and Analyze Submissions – Regularly check form activity for unusual patterns or spikes

Optimize for UX – Avoid overly aggressive challenges that frustrate real users

Secure Back-End Validation – Validate input server-side, even if client-side protections exist

Update and Iterate – Adjust strategies as bots evolve and new attack methods emerge
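For the back-end validation point, a small sketch of server-side checks on a contact form follows. The field names, length limits, and error messages are hypothetical, and the email pattern is a deliberately loose shape check rather than a full address validator.

```python
import re
from typing import Dict, Mapping

# Loose shape check: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MAX_MESSAGE_LENGTH = 2000  # assumed limit


def validate_contact_form(form: Mapping[str, str]) -> Dict[str, str]:
    """Return a dict of field -> error message; empty means the input is valid."""
    errors: Dict[str, str] = {}
    email = form.get("email", "").strip()
    message = form.get("message", "").strip()
    if len(email) > 254 or not EMAIL_RE.match(email):
        errors["email"] = "Enter a valid email address."
    if not message:
        errors["message"] = "Message is required."
    elif len(message) > MAX_MESSAGE_LENGTH:
        errors["message"] = "Message is too long."
    return errors
```

Running these checks on the server matters because a bot can skip the browser, and any client-side validation with it.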

Implementation Example

A typical secure form workflow:

User opens the form

Client-side validation checks required fields

Behavioral analysis and honeypot verification occur in the background

A CAPTCHA is triggered if suspicious activity is detected

The submission is sent to the backend with a unique token

Server validates input, token, and IP before processing

This layered approach keeps friction low for legitimate users while putting several independent checks in front of automated traffic.
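The workflow above can be sketched as a single decision function that combines the results of the individual checks. The `Signals` fields, the outcome labels, and the order of the checks are assumptions chosen for illustration; each signal would be produced by its own check earlier in the request.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Results of the individual checks for one submission (hypothetical shape)."""
    honeypot_filled: bool
    token_valid: bool
    ip_blocked: bool
    rate_limited: bool
    suspicious_behavior: bool
    captcha_passed: bool = False


def decide(s: Signals) -> str:
    """Return "reject", "throttle", "challenge", or "accept"."""
    # Hard failures: cheap, high-confidence signals are checked first.
    if s.honeypot_filled or not s.token_valid or s.ip_blocked:
        return "reject"
    if s.rate_limited:
        return "throttle"
    # Soft signal: ask for a CAPTCHA only when behavior looks off.
    if s.suspicious_behavior and not s.captcha_passed:
        return "challenge"
    return "accept"
```

Most legitimate users never see a challenge in this arrangement, since the CAPTCHA appears only when the softer signals raise doubt.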

Business Benefits

Reduces spam and fraudulent submissions, saving time and operational costs

Protects sensitive user data from scraping and automated attacks

Maintains analytics accuracy, leading to better decision-making

Enhances user trust by safeguarding interactions without excessive friction

Supports compliance with data privacy regulations

Challenges and Considerations

Balancing security and UX—too many challenges can frustrate users

Advanced bots can bypass basic protections, requiring continuous monitoring

Integration complexity with multiple forms, third-party tools, or single-page applications

Accessibility—ensure CAPTCHA or behavioral methods are accessible to all users

Conclusion

Bot protection on forms is a critical component of modern web security. By combining CAPTCHAs, honeypots, behavioral analysis, rate limiting, and token verification, organizations can prevent automated attacks while maintaining a smooth user experience.

A layered, proactive approach ensures forms remain secure, trustworthy, and functional, protecting both business data and customer interactions while minimizing operational overhead.
