Team PixelPilot

A/B Testing with AI Features

Plan A/B experiments for AI features: choose causal metrics, control rollouts, and quantify treatment lift with clear, m

Introduction

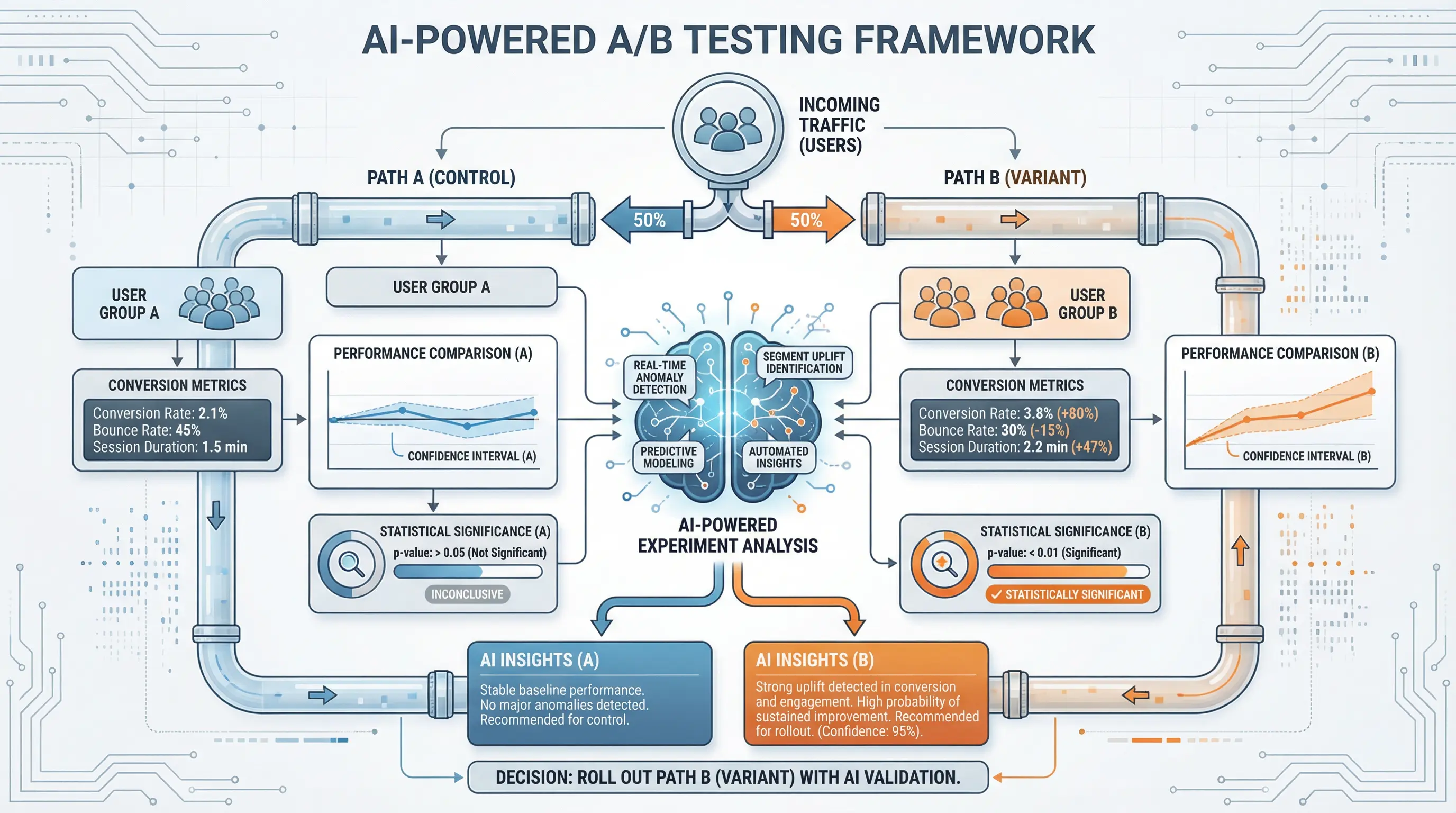

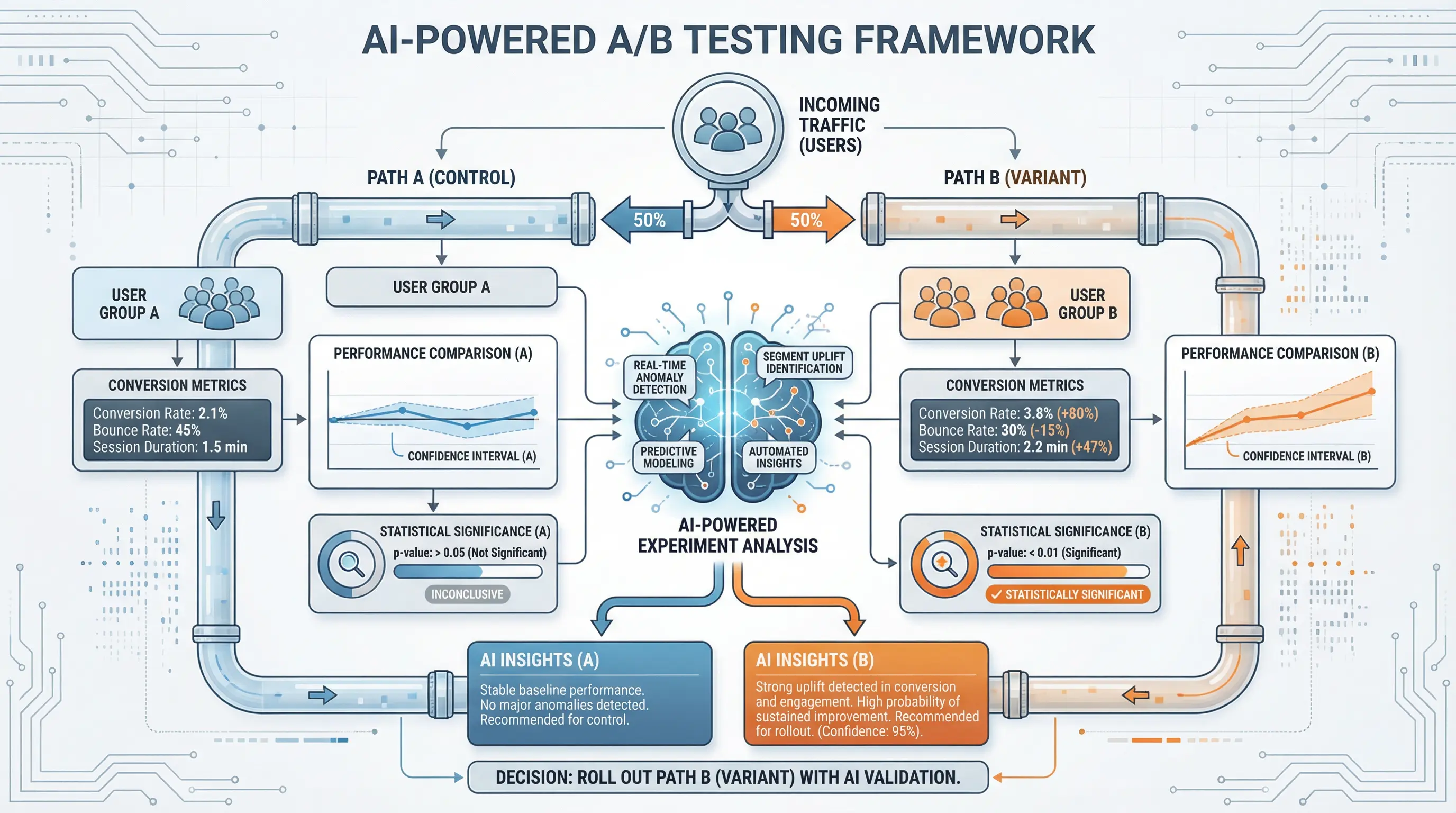

A/B testing is a fundamental method for optimizing digital products and marketing strategies, allowing teams to compare two versions of a webpage, app feature, or campaign element to determine which performs better.

In 2026, AI features such as recommendation engines, chatbots, predictive analytics, and personalization algorithms are increasingly integrated into applications, and traditional A/B testing alone may not be sufficient. AI introduces dynamic, data-driven behaviors that require more careful experimentation strategies to measure impact effectively.

This article explores how to conduct A/B testing when AI features are involved, best practices, challenges, and practical guidance for actionable results.

Understanding AI Features in A/B Testing

What Makes AI Features Different

AI-driven features differ from static elements in several ways:

Dynamic Outputs – AI features generate personalized content or predictions that change per user

Continuous Learning – Some AI systems adapt over time, meaning results may evolve during the experiment

Complex Metrics – Success may involve multiple outcomes, such as engagement, revenue, or retention

Interdependencies – AI predictions may interact with other features or user behaviors, making isolation challenging

Implications for A/B Testing

Traditional A/B frameworks assume each variant behaves the same way for every user throughout the test. AI features break that assumption, so experiments need careful design to avoid confounding factors

Testing must account for personalization, model drift, and interaction effects

Designing A/B Tests for AI Features

1. Define Clear Objectives

Establish measurable goals specific to the AI feature:

Increased click-through rates (CTR) for recommendations

Improved conversion from AI-powered forms or chatbots

Higher engagement or session duration with AI content personalization

2. Choose the Right Experiment Type

Classic A/B Test – Compare two groups: AI-enabled vs. control

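Multi-Armed Bandit – Dynamically allocates more traffic to better-performing variants as data arrives. This suits adaptive AI models when the goal is to maximize outcomes during the test, at the cost of a less precise estimate of the difference between variants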

Personalized or Segment-Based Tests – Evaluate AI performance for different user segments separately
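
To illustrate how a multi-armed bandit shifts traffic, here is a minimal Thompson sampling sketch. It is one common bandit strategy, not necessarily the one your experimentation platform uses. The function names, the variant labels, and the simulated conversion rates are all assumptions made for this example.

```python
import random


def thompson_pick(stats, rng):
    """Return the variant whose sampled conversion rate is highest.

    stats maps a variant name to a (successes, failures) tuple.
    """
    best_name, best_draw = None, -1.0
    for name, (successes, failures) in stats.items():
        # Beta(1 + successes, 1 + failures) is the posterior under a uniform prior.
        draw = rng.betavariate(1 + successes, 1 + failures)
        if draw > best_draw:
            best_name, best_draw = name, draw
    return best_name


def simulate(true_rates, rounds, seed=0):
    """Simulate traffic allocation against hypothetical true conversion rates."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    for _ in range(rounds):
        name = thompson_pick(stats, rng)
        successes, failures = stats[name]
        if rng.random() < true_rates[name]:
            stats[name] = (successes + 1, failures)
        else:
            stats[name] = (successes, failures + 1)
    return stats


if __name__ == "__main__":
    # Hypothetical rates, used only to drive the simulation.
    result = simulate({"control": 0.05, "ai": 0.20}, rounds=5000)
    for name, (successes, failures) in result.items():
        print(name, "users served:", successes + failures)
```

In a simulation like this, the variant with the higher underlying rate tends to receive most of the traffic after an initial exploration phase, which is why bandit results are harder to read as a clean A/B comparison.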

3. Randomization and Isolation

Randomly assign users to control and AI test groups

Ensure each user sees the same variant on every visit and device, so that no user contributes data to both groups

Consider stratified sampling for heterogeneous audiences
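
One common way to get random but consistent assignment is to hash the user ID together with the experiment name. The sketch below assumes string user IDs and a two-variant test; `assign_variant`, the variant labels, and the experiment name are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically map a user to "ai" or "control".

    The same user_id and experiment always yield the same variant, and
    including the experiment name keeps assignments independent across tests.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 32 bits of the hash as a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "ai" if bucket < treatment_share else "control"


if __name__ == "__main__":
    for uid in ["user-1", "user-2", "user-3"]:
        print(uid, assign_variant(uid, "ai-recommendations-test"))
```

Because the assignment depends only on the inputs, no lookup table is needed, and a returning user lands in the same group without any stored state.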

4. Duration and Sample Size

AI features may require larger sample sizes due to variability in output

Ensure the test runs long enough to capture meaningful user interactions and behaviors

Monitor model drift over time to ensure results reflect true AI performance
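
For a rough idea of how many users a test needs, the standard normal-approximation formula for comparing two conversion rates can be sketched as follows. The baseline and target rates in the usage example are hypothetical, and the formula assumes a simple two-sided test on a binary metric; noisier AI outputs or non-binary metrics would need a different calculation.

```python
import math
from statistics import NormalDist


def sample_size_per_group(p_control, p_treatment, alpha=0.05, power=0.8):
    """Approximate users needed per group to detect p_control vs p_treatment."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_treatment - p_control
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


if __name__ == "__main__":
    # Hypothetical: 10% baseline conversion, hoping to detect a lift to 12%.
    print(sample_size_per_group(0.10, 0.12))
```

Halving the expected lift roughly quadruples the required sample, which is one reason small AI improvements need long-running tests.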

Metrics and Measurement

Choosing the Right Metrics

Primary Metrics – Core business outcomes such as revenue, conversion, or retention

Secondary Metrics – Engagement, session length, or feature adoption

AI-Specific Metrics – Prediction accuracy, recommendation click-through, or model confidence scores

Considerations for Analysis

Use statistical significance and confidence intervals to validate results

Apply multi-metric evaluation to capture holistic impact

Monitor distributional effects across segments, as AI may improve outcomes for some users while having neutral or negative effects for others
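
As a sketch of the analysis step, the function below computes the difference in conversion rate between the AI group and control, with a two-sided p-value and a confidence interval from a two-proportion z-test. The counts and segment names in the usage example are made up, and the normal approximation assumes reasonably large groups with at least some conversions.

```python
import math
from statistics import NormalDist


def lift_with_ci(conv_control, n_control, conv_treatment, n_treatment, alpha=0.05):
    """Absolute lift (treatment minus control) with p-value and confidence interval."""
    p_c = conv_control / n_control
    p_t = conv_treatment / n_treatment
    diff = p_t - p_c

    # Pooled standard error for the test of "no difference".
    pooled = (conv_control + conv_treatment) / (n_control + n_treatment)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_treatment))
    z = diff / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # Unpooled standard error for the confidence interval around the lift.
    se = math.sqrt(p_c * (1 - p_c) / n_control + p_t * (1 - p_t) / n_treatment)
    margin = NormalDist().inv_cdf(1 - alpha / 2) * se
    return {"diff": diff, "p_value": p_value, "ci": (diff - margin, diff + margin)}


if __name__ == "__main__":
    # Hypothetical per-segment counts: (control conversions, control users,
    # treatment conversions, treatment users).
    segments = {
        "new_users": (200, 2500, 260, 2500),
        "returning_users": (300, 2500, 295, 2500),
    }
    for name, counts in segments.items():
        print(name, lift_with_ci(*counts))
```

Running the same function per segment makes it visible when an overall lift hides a neutral or negative effect for one group. Checking many segments increases the chance of false positives, so segment results are best treated as exploratory.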

Best Practices for A/B Testing AI Features

1. Test Early and Iteratively

Start with small-scale experiments to validate assumptions and model behavior

Iterate on AI model parameters or training data to improve performance

2. Use Shadow Mode Testing

Run AI features in shadow mode alongside control, without impacting user experience

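Compare the AI's predictions with what actually happened to assess prediction quality before deployment. Because users never see shadow outputs, this step validates the model but does not measure user-facing impact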
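
A minimal sketch of shadow mode, assuming a synchronous request handler: the user always receives the control response, while the AI output is only logged for later comparison. The function names and the in-memory log are hypothetical; a production system would more likely run the shadow call off the request path and write to a proper logging pipeline.

```python
import logging
from typing import Callable

logger = logging.getLogger("shadow")


def serve_with_shadow(
    request: dict,
    control: Callable[[dict], str],
    shadow_model: Callable[[dict], str],
    log: list,
) -> str:
    """Serve the control response and record what the AI would have returned."""
    response = control(request)
    try:
        shadow_output = shadow_model(request)
        log.append({"request": request, "served": response, "shadow": shadow_output})
    except Exception:
        # A failing shadow model must never affect the user-facing response.
        logger.exception("shadow model failed")
    return response


if __name__ == "__main__":
    records = []
    served = serve_with_shadow(
        {"user_id": "user-1"},
        control=lambda req: "rule-based suggestion",
        shadow_model=lambda req: "ai suggestion",
        log=records,
    )
    print(served, records)
```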

3. Combine Experimentation and Analytics

Integrate AI performance logs with A/B test results

Use insights to refine both the model and user experience

4. Avoid Bias in Testing

Ensure randomization is fair and represents all user groups

Avoid exposing the AI model to only certain demographics, which could skew results
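
One way to check that randomization behaved as intended is a sample ratio mismatch (SRM) test, which compares the observed group sizes with the planned split using a chi-square test. The sketch below assumes two groups; the function name and the counts are illustrative.

```python
import math


def srm_p_value(n_control, n_treatment, expected_treatment_share=0.5):
    """P-value of a chi-square test (1 degree of freedom) on group sizes."""
    total = n_control + n_treatment
    expected_treatment = total * expected_treatment_share
    expected_control = total - expected_treatment
    chi2 = (
        (n_control - expected_control) ** 2 / expected_control
        + (n_treatment - expected_treatment) ** 2 / expected_treatment
    )
    # For 1 degree of freedom, the chi-square survival function is erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


if __name__ == "__main__":
    # Hypothetical counts for a test planned as a 50/50 split.
    print(srm_p_value(5000, 5500))
```

A very small p-value suggests the split differs from the plan by more than chance would explain, for example because one variant fails to load for some users. In that case the assignment pipeline is worth investigating before trusting any metric comparison.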

5. Document Everything

Record model versions, parameters, datasets, and test setup

Maintain clear documentation for reproducibility and auditability
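
As one possible shape for such a record, the sketch below stores the experiment setup in a small dataclass and serializes it to JSON so it can be versioned alongside the results. All field names and values are assumptions chosen for illustration, not a required schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class ExperimentRecord:
    """Snapshot of what was tested, kept for reproducibility and audits."""
    experiment: str
    model_version: str
    dataset: str
    parameters: dict = field(default_factory=dict)
    primary_metric: str = "conversion"
    treatment_share: float = 0.5


def to_json(record: ExperimentRecord) -> str:
    """Serialize the record with stable key order so diffs stay readable."""
    return json.dumps(asdict(record), sort_keys=True, indent=2)


if __name__ == "__main__":
    # Hypothetical values.
    record = ExperimentRecord(
        experiment="ai-recommendations-test",
        model_version="v1",
        dataset="training-snapshot-01",
        parameters={"top_k": 5},
    )
    print(to_json(record))
```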

Challenges and Considerations

Model Drift – Model behavior or input data may change during testing, so early and late results may not be directly comparable

Interdependent Features – AI outputs may influence user behavior in multiple ways, making attribution tricky

Data Privacy – Ensure testing complies with GDPR, CCPA, and other regulations

Complex Metrics – Some AI features require multiple layers of measurement to evaluate effectively

Infrastructure Needs – Real-time AI experimentation may require advanced analytics and logging systems
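
To make the drift concern concrete, one common heuristic is the Population Stability Index (PSI), which compares the distribution of model scores at the start of a test with a later window. The sketch below is a simplified version with equal-width bins; the bin count, the smoothing constant, and the sample scores are assumptions, and other drift measures may fit your data better.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a later sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    # Hypothetical model confidence scores from week 1 and week 3 of a test.
    week_1 = [i / 100 for i in range(100)]
    week_3 = [min(0.99, s + 0.3) for s in week_1]
    print(psi(week_1, week_1), psi(week_1, week_3))
```

A PSI of zero means the two windows have identical binned distributions, and larger values indicate a bigger shift. Any alert threshold is a convention to be tuned per metric rather than a fixed rule.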

Real-World Use Cases

E-Commerce Recommendations – Test AI-powered product recommendations vs. manual or rule-based suggestions

Chatbots and Conversational AI – Evaluate AI-assisted support against standard scripted interactions

Content Personalization – Compare personalized news feeds or marketing emails to generic versions

Pricing and Offers – Experiment with AI-driven dynamic pricing vs. static pricing

Fraud Detection – Test AI models for anomaly detection in payments, monitoring false positives and user impact

Business Benefits

Data-Driven Decision Making – Quantifies the real-world impact of AI features

Optimized AI Performance – Iterative testing refines models for better results

Higher Conversion and Engagement – Identifies which AI outputs truly resonate with users

Risk Mitigation – Detects negative user impacts before wide deployment

Scalable Experimentation – Enables organizations to deploy AI features safely and confidently

Conclusion

A/B testing with AI features requires careful planning, robust metrics, and adaptive methodologies. Unlike static features, AI introduces dynamic behavior, personalization, and learning, which must be considered when designing experiments.

By combining clear objectives, proper randomization, shadow mode testing, iterative refinement, and comprehensive measurement, organizations can maximize the impact of AI-driven features while minimizing risks.

A/B testing of AI features not only validates performance but also shortens the feedback loop for improving models, helping digital products deliver measurable business value and better user experiences.
